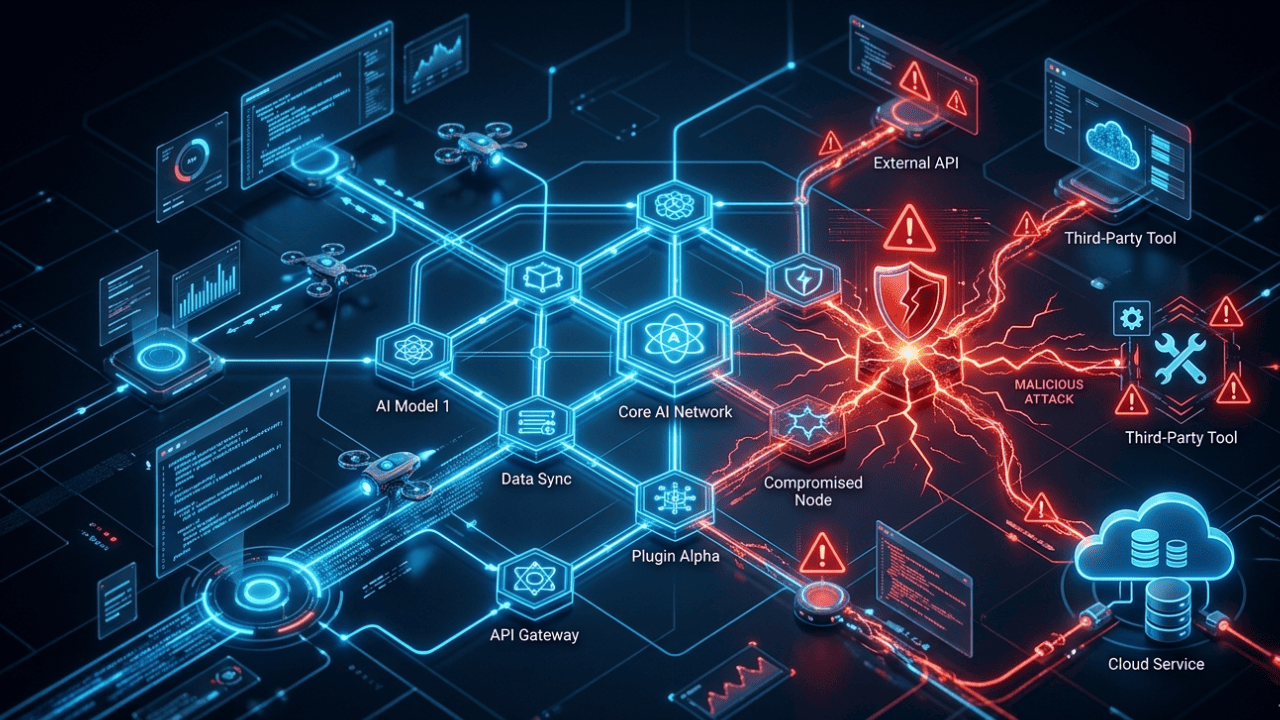

In 2026, supply chain risk is one of the fastest-growing threats to AI systems. Modern AI systems connect to third-party tools, plugins, and APIs, and attackers can use any of those connections as a point of entry.

What Are Supply Chain Risks in AI?

A supply chain attack happens when a threat actor targets a dependency rather than the main system.

Think of it like poisoning a water supply instead of attacking one house.

In AI, that means targeting the tools, models, APIs, or data your AI system depends on. If any piece is compromised, the whole system can be affected.

Simple definition: if your AI trusts a tool and that tool is malicious, your AI is compromised.

Why Agentic AI Makes Supply Chain Risks Worse

Traditional software runs fixed instructions. Agentic AI takes autonomous actions, calls tools, and makes decisions on its own.

That autonomy creates a much bigger attack surface.

Here’s why:

APIs

Agentic AI systems call APIs constantly. A compromised API can feed false data or inject malicious instructions into the agent's context without the AI noticing.

Plugins and Extensions

Many agentic systems support third-party plugins. A malicious plugin can quietly intercept data, alter outputs, or escalate privileges.

Integrations

AI agents integrate with CRMs, email tools, databases, and cloud services. Each integration is a new trust relationship, and a new risk.

Autonomous Actions

When AI acts without human approval, a supply chain attack can cause damage at machine speed, with no person in the loop to catch it.
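One common mitigation for the autonomous-actions problem is a human approval gate in front of high-risk tools. The Python sketch below is a minimal illustration, not any specific framework's API: the tool names in `HIGH_RISK_TOOLS`, the `ToolCall` type, and the `run_tool`/`approve` callbacks are hypothetical placeholders for whatever your agent runtime provides.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical set of tool names treated as high risk; adjust for your own agent.
HIGH_RISK_TOOLS = {"send_email", "transfer_funds", "delete_records"}


@dataclass
class ToolCall:
    tool: str
    args: dict = field(default_factory=dict)


def execute_with_gate(call: ToolCall,
                      run_tool: Callable[[ToolCall], str],
                      approve: Callable[[ToolCall], bool]) -> str:
    """Run low-risk calls directly; high-risk calls need explicit human approval."""
    if call.tool in HIGH_RISK_TOOLS and not approve(call):
        return f"blocked: {call.tool} requires human approval"
    return run_tool(call)


if __name__ == "__main__":
    def fake_run(call: ToolCall) -> str:
        return f"ran {call.tool}"

    def deny_all(call: ToolCall) -> bool:
        return False

    print(execute_with_gate(ToolCall("get_weather", {"city": "Oslo"}), fake_run, deny_all))
    # prints "ran get_weather"
    print(execute_with_gate(ToolCall("send_email", {"to": "x@example.com"}), fake_run, deny_all))
    # prints "blocked: send_email requires human approval"
```

In a real deployment, `approve` might open a ticket or chat prompt and wait for a reviewer. The key design choice is that the gate sits outside the model, so a poisoned tool output cannot argue its way past it.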

Learn more about the broader threat landscape in our guide to agentic AI security risks.

Common AI Supply Chain Threats

These are the most common attack vectors targeting AI supply chains today:

- Compromised APIs — Attackers inject malicious responses into API calls your AI agent relies on

- Malicious plugins — Fake or trojaned plugins installed into AI platforms to steal data or hijack actions

- Model poisoning — Training data or fine-tuning datasets are tampered with to alter AI behavior

- Third-party breaches — A vendor your AI trusts gets hacked, and the attacker pivots to your system

- Dependency hijacking — Open-source libraries used in AI tools are replaced with malicious versions

- Prompt injection via tools — Malicious content in tool outputs tricks the AI agent into unsafe behavior

- AI identity attacks — Attackers spoof or hijack the identity of trusted AI agents or services

For a deep dive into the last point, read our article on AI identity attacks and how they target agentic systems.

The OWASP Top 10 for LLM Applications is a great starting point for understanding the most critical AI security vulnerabilities today.

Example Attack Scenarios

Example 1: The Malicious npm Package

A developer builds an AI agent on top of an open-source library. That library is hijacked on npm, and every AI system that installs the compromised version now runs attacker-controlled code. Like the SolarWinds compromise disclosed in 2020, the attack rides a trusted distribution channel, but this time the target is an AI toolchain.

Example 2: Poisoned Training Data

A company fine-tunes an AI model on a public dataset. Unknown to them, a portion of the data was manipulated. The model now behaves differently on specific inputs, creating a hidden backdoor.

Example 3: Rogue Plugin in an AI Platform

A business installs a third-party plugin into its AI assistant platform. The plugin silently copies sensitive customer queries and sends them to an external server.

Example 4: Compromised API Provider

An AI agent uses a weather API to make decisions. The API provider is breached, and attackers push false data that causes the AI to make bad automated decisions in a critical workflow.

These aren’t theoretical. The attack patterns already exist — they’re just moving into AI ecosystems now.

How to Secure AI Supply Chains

Securing your AI supply chain requires layers. No single solution is enough.

Vendor Verification

- Vet every third-party tool, plugin, and API provider before integration

- Check for security certifications, breach history, and data handling policies

- Prefer vendors with published vulnerability disclosure programs

API Security

- Use API keys with least-privilege permissions

- Validate all API responses — never blindly trust returned data

- Monitor for unexpected changes in API behavior or response patterns

- Rate-limit and sandbox API interactions where possible
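To make "validate all API responses" concrete, here is a minimal Python sketch that checks a response from the weather API in Example 4 before the agent acts on it. The field names (`city`, `temperature_c`), the plausible temperature range, and the `InvalidResponse` exception are assumptions for illustration; a real integration would validate against the provider's documented schema.

```python
from typing import Any

# Hypothetical response shape for the weather API described in Example 4.
EXPECTED_FIELDS = {"city": str, "temperature_c": (int, float)}
# Rough physical range in degrees Celsius; values outside it are treated as suspect.
TEMP_RANGE_C = (-90.0, 60.0)


class InvalidResponse(ValueError):
    pass


def validate_weather(payload: Any) -> dict:
    """Reject responses that are malformed, carry extra fields, or are implausible."""
    if not isinstance(payload, dict):
        raise InvalidResponse("response is not a JSON object")
    unexpected = set(payload) - set(EXPECTED_FIELDS)
    if unexpected:
        raise InvalidResponse(f"unexpected fields: {sorted(unexpected)}")
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in payload:
            raise InvalidResponse(f"missing field: {name}")
        value = payload[name]
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise InvalidResponse(f"wrong type for {name}")
    low, high = TEMP_RANGE_C
    # NaN fails this comparison too, so it is rejected as well
    if not low <= payload["temperature_c"] <= high:
        raise InvalidResponse("temperature outside plausible range")
    return payload
```

Rejecting unexpected fields is deliberately strict: extra free-text fields are one way injected instructions could reach the agent. Loosen it only if the provider's schema requires it.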

Access Control

- Apply zero-trust principles to AI agents — don’t give them more access than needed

- Use role-based access control (RBAC) for AI tool permissions

- Log every action your AI agent takes and what tools it calls
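A deny-by-default tool allowlist is one way to combine RBAC with the logging requirement above. This Python sketch uses the standard `logging` module for the audit trail; the role names, tool names, `PermissionDenied` exception, and `authorize_tool_call` helper are hypothetical.

```python
import logging

audit = logging.getLogger("agent.audit")

# Hypothetical role-to-tool mapping: each agent role gets only the tools it needs.
ROLE_PERMISSIONS = {
    "support_agent": {"search_kb", "read_ticket"},
    "billing_agent": {"read_invoice", "issue_refund"},
}


class PermissionDenied(PermissionError):
    pass


def authorize_tool_call(role: str, tool: str) -> None:
    """Deny by default: a tool is allowed only if the role explicitly lists it."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    if tool not in allowed:
        audit.warning("DENY role=%s tool=%s", role, tool)
        raise PermissionDenied(f"{role} may not call {tool}")
    # Successful calls are logged too, so the audit trail covers every tool use
    audit.info("ALLOW role=%s tool=%s", role, tool)


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
    authorize_tool_call("support_agent", "search_kb")
    try:
        authorize_tool_call("support_agent", "issue_refund")
    except PermissionDenied as exc:
        print(exc)
```

Unknown roles fall back to an empty permission set, so a spoofed or misconfigured agent identity gets no tools rather than all of them.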

Model and Data Integrity

- Hash and verify training data and model weights before use

- Use provenance tracking for datasets — know where your data comes from

- Scan fine-tuning datasets for adversarial content before training
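The hashing step can be as simple as comparing SHA-256 digests against a pinned manifest. This sketch uses only Python's standard library; the manifest format (artifact file name mapped to a hex digest) and the `verify_artifacts` helper are assumptions. The manifest itself must come from a trusted channel, since an attacker who can rewrite both the weights and the manifest defeats the check.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large model weights are never fully loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(manifest: dict[str, str], root: Path) -> list[str]:
    """Return names of artifacts that are missing or whose SHA-256 differs from the pinned value."""
    mismatches = []
    for name, expected in manifest.items():
        path = root / name
        if not path.is_file():
            mismatches.append(name)
            continue
        if sha256_file(path) != expected.lower():
            mismatches.append(name)
    return mismatches
```

A loader would call `verify_artifacts` before training or inference and refuse to proceed if the returned list is non-empty.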

Monitoring and Detection

- Set up anomaly detection for unusual AI agent behavior

- Alert on unexpected API calls, data exfiltration patterns, or privilege escalation

- Regularly audit third-party integrations for changes
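Anomaly detection does not have to start with machine learning. The sketch below is a simple heuristic, assuming you can collect the names of the tools an agent calls in fixed time windows: it flags tools never seen during a baseline period and tools called more than `spike_factor` times their baseline average. `ToolCallMonitor` and its parameters are hypothetical, and the threshold would need tuning on real traffic.

```python
from collections import Counter


class ToolCallMonitor:
    """Compare an agent's tool usage in one time window against a recorded baseline.

    A simple heuristic, not a full anomaly-detection system: it flags tools
    never seen during the baseline and tools called far more often than usual.
    """

    def __init__(self, baseline_windows: list[list[str]], spike_factor: float = 3.0):
        totals = Counter(tool for window in baseline_windows for tool in window)
        self.known_tools = set(totals)
        n_windows = max(len(baseline_windows), 1)
        # Average number of calls per window for each tool during normal operation
        self.mean_per_window = {tool: count / n_windows for tool, count in totals.items()}
        self.spike_factor = spike_factor

    def check_window(self, calls: list[str]) -> list[str]:
        """Return alert strings for the given window, sorted by tool name."""
        alerts = []
        for tool, count in sorted(Counter(calls).items()):
            if tool not in self.known_tools:
                alerts.append(f"new tool: {tool}")
            elif count > self.spike_factor * self.mean_per_window[tool]:
                alerts.append(f"spike: {tool} x{count}")
        return alerts
```

For example, with a baseline of `[["search", "search", "read"], ["search", "read"]]`, a window containing a call to an unfamiliar `upload_external` tool produces a "new tool" alert, which is exactly the kind of unexpected outbound call the checklist above asks you to flag.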

Incident Response

- Have a kill-switch or human override for autonomous AI agents

- Define what “normal” AI behavior looks like so you can detect deviations fast

- Include AI supply chain compromise in your incident response playbooks
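A kill-switch can be sketched as a shared flag that the agent loop checks before every action. This Python example uses `threading.Event` so that an operator thread or alerting hook can trip it while the agent runs; `KillSwitch`, `run_agent`, and the step callables are hypothetical names for illustration.

```python
import threading
from typing import Callable, Iterable


class KillSwitch:
    """A shared halt flag that a human operator or alerting system can trip."""

    def __init__(self) -> None:
        self._halted = threading.Event()
        self.reason = ""

    def trip(self, reason: str) -> None:
        self.reason = reason
        self._halted.set()

    @property
    def halted(self) -> bool:
        return self._halted.is_set()


def run_agent(steps: Iterable[Callable[[], str]], switch: KillSwitch) -> list[str]:
    """Execute agent steps in order, checking the kill switch before every action."""
    results = []
    for step in steps:
        if switch.halted:
            results.append(f"halted: {switch.reason}")
            break
        results.append(step())
    return results
```

Checking the flag before each step, rather than once at startup, means a compromise detected mid-run stops the next action. A step that is already running still completes, so long-running tools may need their own cancellation checks.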

Future Risks in AI Ecosystems

The threat landscape is evolving fast. Here’s what to watch in 2026 and beyond:

AI-to-AI Trust Chains

As AI agents increasingly call other AI agents, trust chains become complex. A single compromised agent can affect a whole network of downstream systems.

Marketplace Poisoning

More platforms are building AI plugin marketplaces. Attackers will target these to distribute malicious extensions at scale.

Synthetic Training Data Risks

AI-generated datasets are being used to train new models. If that synthetic data is manipulated, the models trained on it carry hidden flaws.

Autonomous Procurement

Some AI systems can now select and onboard their own tools. Without human oversight, this creates a fully automated attack vector.

Regulation Gaps

Most AI security frameworks don’t yet address supply chain risks specifically. This leaves organizations without clear compliance guidance, and attackers with more room to operate.
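One building block against spoofed agents in AI-to-AI trust chains is message authentication. The sketch below signs messages between agents with HMAC-SHA256 from Python's standard library, so a receiving agent can reject tampered messages or messages from unknown senders. The agent names, keys, and message envelope are hypothetical, and the scheme has limits: anyone holding a shared key can sign as that agent, and it does not prevent replay of old messages. Larger agent networks may be better served by asymmetric signatures.

```python
import hashlib
import hmac
import json

# Hypothetical per-agent shared secrets; in practice, load these from a secrets manager.
AGENT_KEYS = {"planner-agent": b"planner-secret-key"}


def sign_message(sender: str, payload: dict) -> dict:
    # The tag covers the exact serialized body, including the sender name
    body = json.dumps({"sender": sender, "payload": payload}, sort_keys=True)
    tag = hmac.new(AGENT_KEYS[sender], body.encode(), hashlib.sha256).hexdigest()
    return {"sender": sender, "body": body, "tag": tag}


def verify_message(message: dict) -> dict:
    """Return the payload only if the tag matches the claimed sender's key."""
    sender = message.get("sender")
    key = AGENT_KEYS.get(sender) if isinstance(sender, str) else None
    if key is None:
        raise ValueError("unknown sender")
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched
    if not hmac.compare_digest(expected.encode(), str(message["tag"]).encode()):
        raise ValueError("bad signature")
    # Parse only after the tag checks out, then confirm the signed sender matches
    body = json.loads(message["body"])
    if body.get("sender") != sender:
        raise ValueError("sender mismatch")
    return body["payload"]
```

The receiving agent verifies before acting, so a message altered in transit, or one claiming to come from an agent it has no key for, is rejected instead of trusted.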

CISA’s AI security guidance outlines how critical infrastructure teams should prepare for emerging AI threats.

FAQ: Supply Chain Risks in Agentic AI Systems

What are AI supply chain risks?

AI supply chain risks are threats that come from external tools, services, and data your AI system depends on. If any of those are compromised, your AI can be manipulated or used to cause harm — even if the core AI itself is secure.

How do AI systems get compromised through supply chains?

Attackers target APIs, plugins, open-source libraries, training datasets, or third-party integrations. They don’t attack the AI directly. Instead, they poison something the AI trusts — and use that trust against you.

Why are agentic AI systems especially vulnerable?

Agentic AI takes autonomous actions without constant human approval. It calls tools, executes code, and makes decisions at speed. A supply chain compromise in an agentic system can cause real damage before anyone notices.

How can I secure AI integrations?

Start with vendor verification and least-privilege access control. Validate API responses, monitor agent behavior, and audit third-party plugins regularly. Never assume a trusted source is safe — verify it continuously.

What is model poisoning?

Model poisoning is when an attacker manipulates the training data or fine-tuning process to alter how an AI behaves. The model may appear normal but behave unexpectedly on specific inputs — like a hidden backdoor in the AI’s logic.

How are AI supply chain attacks different from traditional software supply chain attacks?

Traditional supply chain attacks target code dependencies. AI supply chain attacks can also target data, behavior, and trust relationships between AI agents. The blast radius is wider because AI systems act autonomously on what they receive.

Key Takeaways

- Supply chain risks in agentic AI systems are growing fast in 2026

- Agentic AI’s autonomy makes supply chain attacks especially dangerous

- APIs, plugins, and third-party tools are the main attack surface

- Defense requires vendor verification, access control, monitoring, and incident readiness

- The future will bring AI-to-AI trust chains and marketplace poisoning risks

Stay ahead of agentic AI security risks by treating every external dependency as a potential threat — because in 2026, that’s exactly what attackers do.
